Project Description

Can a machine distinguish between a honey bee and a bumble bee? Being able to identify bee species from images, while challenging, would allow researchers to more quickly and effectively collect field data. In this project, you will use the Python image library Pillow to load and manipulate image data, then build a model to identify honey bees and bumble bees given an image of these insects.

This project is the second part of a series of projects that walk through working with image data, building classifiers using traditional techniques, and leveraging the power of deep learning for computer vision.

1. Import Python libraries

A honey bee (Apis).

Can a machine identify a bee as a honey bee or a bumble bee? These bees have different behaviors and appearances, but given the variety of backgrounds, positions, and image resolutions, it can be a challenge for machines to tell them apart.

Being able to identify bee species from images is a task that ultimately would allow researchers to more quickly and effectively collect field data. Pollinating bees have critical roles in both ecology and agriculture, and diseases like colony collapse disorder threaten these species. Identifying different species of bees in the wild means that we can better understand the prevalence and growth of these important insects.

A bumble bee (Bombus).

After loading and pre-processing images, this notebook walks through building a model that can automatically detect honey bees and bumble bees.

# used to change filepaths
import os

import matplotlib as mpl
import matplotlib.pyplot as plt
from IPython.display import display
%matplotlib inline

import pandas as pd
import numpy as np

# import Image from PIL
from PIL import Image

from skimage.feature import hog
from skimage.color import rgb2gray

from sklearn.preprocessing import StandardScaler
from sklearn.decomposition import PCA

# import train_test_split from sklearn's model selection module
from sklearn.model_selection import train_test_split

# import SVC from sklearn's svm module
from sklearn.svm import SVC

# import roc_curve, auc, and accuracy_score from sklearn's metrics module
from sklearn.metrics import roc_curve, auc, accuracy_score

2. Display image of each bee type

Now that we have all of our imports ready, it is time to look at some images. We will load our labels.csv file into a dataframe called labels, where the index is the image name (e.g. an index of 1036 refers to an image named 1036.jpg) and the genus column tells us the bee type. genus takes the value of either 0.0 (Apis or honey bee) or 1.0 (Bombus or bumble bee).

The function get_image converts an index value from the dataframe into a file path where the image is located, opens the image using the Image object in Pillow, and then returns the image as a numpy array.

We'll use this function to load the sixth Apis image and then the sixth Bombus image in the dataframe.

# load the labels using pandas
labels = pd.read_csv("data/labels.csv", index_col=0)

# show the first five rows of the dataframe using head
display(labels.head(5))

def get_image(row_id, root="data/"):
    """
    Converts an image number into the file path where the image is located,
    opens the image, and returns the image as a numpy array.
    """
    filename = "{}.jpg".format(row_id)
    file_path = os.path.join(root, filename)
    img = Image.open(file_path)
    return np.array(img)

# subset the dataframe to just Apis (genus is 0.0) and get the value of the sixth item in the index
apis_row = labels[labels.genus == 0.0].index[5]

# show the corresponding image of an Apis
plt.imshow(get_image(apis_row))
plt.show()

# subset the dataframe to just Bombus (genus is 1.0) and get the value of the sixth item in the index
bombus_row = labels[labels.genus == 1.0].index[5]

# show the corresponding image of a Bombus
plt.imshow(get_image(bombus_row))
plt.show()

3. Image manipulation with rgb2gray

scikit-image has a number of image processing functions built into the library, for example, converting an image to grayscale. The rgb2gray function computes the luminance of an RGB image using the following formula: Y = 0.2125 R + 0.7154 G + 0.0721 B.

Image data is represented as a matrix, where the depth is the number of channels. An RGB image has three channels (red, green, and blue), whereas the returned grayscale image has only one. Accordingly, the original color image has the dimensions 100x100x3, but after calling rgb2gray the channel axis is dropped and the resulting grayscale image has the dimensions 100x100.
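The weighted sum can also be reproduced by hand with NumPy, which makes the change in shape easy to see. This is a minimal sketch on a synthetic random image rather than scikit-image's own implementation; the names `rgb`, `weights`, and `luminance` are made up for illustration.

```python
import numpy as np

# synthetic 100x100 RGB image with float values in [0, 1] (stand-in for a bee photo)
rng = np.random.default_rng(0)
rgb = rng.random((100, 100, 3))

# channel weights taken from the formula: Y = 0.2125 R + 0.7154 G + 0.0721 B
weights = np.array([0.2125, 0.7154, 0.0721])

# the matrix product contracts the last (channel) axis, leaving one value per pixel
luminance = rgb @ weights

print("Color shape:", rgb.shape)
print("Grayscale shape:", luminance.shape)
```

Because the three weights sum to 1, a pixel whose channels are all equal keeps the same value after conversion.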

# load a bombus image using our get_image function and bombus_row from the previous cell
bombus = get_image(bombus_row)

# print the shape of the bombus image
print('Color bombus image has shape: ', bombus.shape)

# convert the bombus image to grayscale
gray_bombus = rgb2gray(bombus)

# show the grayscale image
plt.imshow(gray_bombus, cmap=mpl.cm.gray)

# grayscale bombus image only has one channel
print('Grayscale bombus image has shape: ', gray_bombus.shape)

4. Histogram of oriented gradients

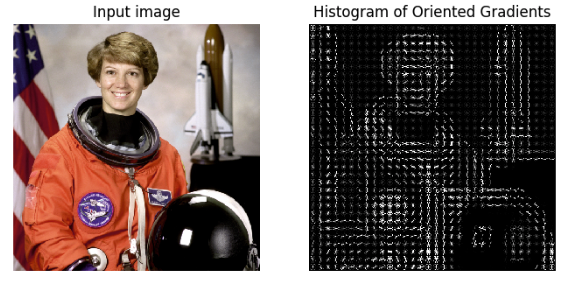

Now we need to turn these images into something that a machine learning algorithm can understand. Traditional computer vision techniques have relied on mathematical transforms to turn images into useful features. For example, you may want to detect edges of objects in an image, increase the contrast, or filter out particular colors.

We've got a matrix of pixel values, but those don't contain enough interesting information on their own for most algorithms. We need to help the algorithms along by picking out some of the salient features for them using the histogram of oriented gradients (HOG) descriptor. The idea behind HOG is that an object's shape within an image can be inferred from its edges, and a way to identify edges is to look at the direction of intensity gradients (i.e. changes in luminance).

An image is divided in a grid fashion into cells, and for the pixels within each cell, a histogram of gradient directions is compiled. To improve invariance to highlights and shadows in an image, cells are block normalized, meaning an intensity value is calculated for a larger region of an image called a block and used to contrast normalize all cell-level histograms within each block. The HOG feature vector for the image is the concatenation of these cell-level histograms.
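To make the per-cell step concrete, here is a simplified sketch of a gradient-direction histogram for a single cell. It is an illustration only: it uses `np.gradient`, skips block normalization and bin interpolation, and the helper name `cell_orientation_histogram` is hypothetical, so its numbers will not match the output of scikit-image's `hog`.

```python
import numpy as np

def cell_orientation_histogram(cell, n_bins=9):
    """Magnitude-weighted histogram of unsigned gradient directions for one cell."""
    # intensity gradients along rows (y) and columns (x)
    grad_y, grad_x = np.gradient(cell.astype(float))
    magnitude = np.hypot(grad_x, grad_y)
    # unsigned orientation in degrees, folded into the range 0 to 180
    orientation = np.degrees(np.arctan2(grad_y, grad_x)) % 180
    hist, _ = np.histogram(orientation, bins=n_bins, range=(0, 180), weights=magnitude)
    return hist

# a 16x16 cell containing a vertical edge: dark left half, bright right half
cell = np.zeros((16, 16))
cell[:, 8:] = 1.0

# the gradient points horizontally, so all of the weight lands in the first bin
hist = cell_orientation_histogram(cell)
print(hist)
```

Weighting each pixel's vote by gradient magnitude means flat regions contribute nothing, so the histogram is dominated by the edges inside the cell.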

# run HOG using our grayscale bombus image
hog_features, hog_image = hog(gray_bombus,
                              visualize=True,
                              block_norm='L2-Hys',
                              pixels_per_cell=(16, 16))

# show our hog_image with a gray colormap
plt.imshow(hog_image, cmap=mpl.cm.gray)

5. Create image features and flatten into a single row

Algorithms require data to be in a format where rows correspond to images and columns correspond to features. This means that all the information for a given image needs to be contained in a single row.

We want to provide our model with the raw pixel values from our images as well as the HOG features we just calculated. To do this, we will write a function called create_features that flattens the three-dimensional color image into a one-dimensional (flat) array and then appends the HOG features to it, giving a single row of features per image.
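As a rough check on how long that combined row should be, the arithmetic can be sketched as follows. It assumes a 100x100 RGB image and the scikit-image defaults of 9 orientations and 3x3 cells per block, neither of which is set explicitly in this notebook; the placeholder arrays are zeros and stand in for real features.

```python
import numpy as np

# assumed inputs: a 100x100 RGB image and 16x16 pixel cells, as used in this notebook
height, width, channels = 100, 100, 3
pixels_per_cell = 16
cells_per_block = 3   # assumed scikit-image default
orientations = 9      # assumed scikit-image default

# raw pixel features: one value per pixel per channel
n_color = height * width * channels

# HOG features: blocks slide one cell at a time over the grid of whole cells
cells_per_side = height // pixels_per_cell
blocks_per_side = cells_per_side - cells_per_block + 1
n_hog = blocks_per_side ** 2 * cells_per_block ** 2 * orientations

# flatten and concatenate placeholder arrays of those sizes
color_features = np.zeros((height, width, channels)).flatten()
hog_features = np.zeros(n_hog)
flat_features = np.hstack((color_features, hog_features))

print(n_color, n_hog, flat_features.shape)
```

Under these assumptions the raw pixels contribute 30000 values and HOG contributes 1296, so the pixel values heavily outnumber the HOG features in each row.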

def create_features(img):
    # flatten three channel color image
    color_features = img.flatten()
    # convert image to grayscale
    gray_image = rgb2gray(img)
    # get HOG features from grayscale image
    hog_features = hog(gray_image, block_norm='L2-Hys', pixels_per_cell=(16, 16))
    # combine color and hog features into a single array
    flat_features = np.hstack((color_features, hog_features))
    return flat_features

bombus_features = create_features(bombus)

# print shape of bombus_features
bombus_features.shape

6. Loop over images to preprocess

Above we generated a flattened features array for the bombus image. Now it's time to loop over all of our images. We will create features for each image and then stack the flattened features arrays into a big matrix we can pass into our model.

In the create_feature_matrix function, we'll do the following:

- Load an image

- Generate a row of features using the create_features function above

- Stack the rows into a features matrix

In the resulting features matrix, rows correspond to images and columns to features.
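The stacking step only yields a clean two-dimensional matrix when every row has the same length, which in turn requires every image to have the same dimensions. The sketch below illustrates that check on hypothetical stand-in rows; the explicit length check is an addition for illustration and is not part of the notebook's function.

```python
import numpy as np

# three hypothetical feature rows of equal length (stand-ins for create_features output)
rows = [np.full(5, fill_value=i, dtype=float) for i in range(3)]

# confirm that all rows share one length before stacking them
lengths = {row.shape[0] for row in rows}
assert len(lengths) == 1, "feature rows differ in length"

# convert the list of 1-D arrays into a 2-D matrix
demo_matrix = np.array(rows)
print(demo_matrix.shape)  # (number of images, number of features)
```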

def create_feature_matrix(label_dataframe):
    features_list = []

    for img_id in label_dataframe.index:
        # load image
        img = get_image(img_id)
        # get features for image
        image_features = create_features(img)
        features_list.append(image_features)

    # convert list of arrays into a matrix
    feature_matrix = np.array(features_list)
    return feature_matrix

# run create_feature_matrix on our dataframe of images
feature_matrix = create_feature_matrix(labels)

7. Split into train and test sets

Now we need to split our data into train and test sets. We'll use 70% of images as our training data and test our model on the remaining 30%. Scikit-learn's train_test_split function makes this easy.

# split the data into training and test sets
X_train, X_test, y_train, y_test = train_test_split(feature_matrix,
                                                    labels.genus.values,
                                                    test_size=.3,
                                                    random_state=1234123)

# look at the distribution of labels in the train set
pd.Series(y_train).value_counts()
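One optional variation, not used in this notebook, is to pass `stratify` to train_test_split so that both splits keep the same class proportions as the full dataset. The sketch below uses synthetic data with a deliberately uneven 70/30 class mix; the `demo_` names are made up for illustration and are unrelated to the bee data.

```python
import numpy as np
from sklearn.model_selection import train_test_split

# synthetic stand-ins: 100 samples with 4 features, 70 of class 0.0 and 30 of class 1.0
demo_X = np.arange(400).reshape(100, 4)
demo_y = np.array([0.0] * 70 + [1.0] * 30)

# stratify=demo_y asks for the same class proportions in both splits
demo_X_train, demo_X_test, demo_y_train, demo_y_test = train_test_split(
    demo_X, demo_y, test_size=0.3, random_state=0, stratify=demo_y
)

# class counts in each split
train_counts = {label: int((demo_y_train == label).sum()) for label in (0.0, 1.0)}
test_counts = {label: int((demo_y_test == label).sum()) for label in (0.0, 1.0)}
print(train_counts, test_counts)
```

Whether stratification matters here depends on how balanced the label counts printed by value_counts turn out to be.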